Cyber Intelligence

There are no rules, and no certainties

Coleman Kane - @colemankane

https://malware.re/derbycon2018/

/me

- Coleman Kane

- GE Aviation - Principal Technologist for Security Operations

- Completing Ph.D. in Computer Science at University of Cincinnati, focus in Cyber Operations

- Prior work in software performance/failure analysis, enterprise & embedded software development, heavily Linux and UNIX centric

- Enjoys: family, cat pics, food, good coffee, good beer

What is Intelligence?

Example definitions, borrowed from the pages of The Craft of Intelligence, by Allen W. Dulles:

Foreknowledge is the reason the enlightened prince and the wise general conquer the enemy whenever they move.

‐ Sun Tzu

Intelligence deals with all the things that should be known in advance of initiating a course of action.

‐ Task Force on Intelligence Activities, 2nd Hoover Commission

Foreknowledge

- In intelligence, we are responsible for collecting and organizing knowledge.

- We are then also responsible for using this knowledge to produce actionable and advisory foreknowledge for the organization.

- This foreknowledge often comes in the form of conclusions.

- Thus, the organization is better informed for taking actions than it would otherwise be without the cyber intelligence organization.

Let's define

Families of Intelligence Data

- Collection

  - Recorded observations, facts, or datapoints.

  - Stored artifacts, documents.

  - Supporting evidence.

- Conclusion

  - Recounted observations.

  - Derived information.

  - Expectations.

  - Courses of action.

Inference

How intelligence is often perceived at higher levels, unfortunately

- If condition A is true, then condition B will also be true

- However, if condition B is true, then condition A may either be true or false

B is a problem, effect, or symptom, while A is a cause.

There may be many causes, some known, some unknown.

Inference Chain

- Given: if A then B; if B then C

- Conclusion: if A then C

We know that if A is true, then B is true.

We also know that if B is true, then C is true.

Thus, by analyzing both of these artifacts, or data points, we can conclude a new piece of knowledge: whenever we know A to be true, we can conclude C to be true.

Conclusions are our derived knowledge. This is often considered the Intelligence that is the output of our research.
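A minimal Python sketch of the chain above, assuming rules are stored as hypothetical (premise, conclusion) pairs. It derives C from A, and it also shows that knowing only C tells us nothing about A or B.

```python
def forward_chain(known, rules):
    """Return every fact derivable from `known` using (premise, conclusion) rules."""
    derived = set(known)
    changed = True
    while changed:
        changed = False
        for premise, conclusion in rules:
            # Apply a rule only when its premise is already established.
            if premise in derived and conclusion not in derived:
                derived.add(conclusion)
                changed = True
    return derived


# Hypothetical rule set: "if A then B" and "if B then C".
rules = [("A", "B"), ("B", "C")]

facts_from_a = forward_chain({"A"}, rules)  # A lets us conclude B and C
facts_from_c = forward_chain({"C"}, rules)  # C alone concludes nothing new
```

The rules only run forward. An effect never establishes its cause, which is the point of the previous slide.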

Probabilistic Inference

How intelligence more often behaves, in practice

- Probability of B being true, if we know A to be true

- Learn this relationship through observation & recording

- Our conclusions will exclusively be based on historic data
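As a rough illustration, the probability of B given A can be estimated by counting over recorded observations. The `history` records and the function name below are hypothetical, and the estimate is only as good as the history behind it.

```python
def conditional_probability(observations, given, target):
    """Estimate P(target | given) from a list of dict observations."""
    matching = [obs for obs in observations if obs[given]]
    if not matching:
        return None  # no history for this condition: undefined, not zero
    return sum(1 for obs in matching if obs[target]) / len(matching)


# Hypothetical recorded observations of conditions A and B.
history = [
    {"A": True, "B": True},
    {"A": True, "B": True},
    {"A": True, "B": False},
    {"A": False, "B": True},
    {"A": True, "B": True},
]

p_b_given_a = conditional_probability(history, "A", "B")
```

Returning `None` when A was never observed keeps "we have no data" distinct from "B never follows A".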

Pop Quiz

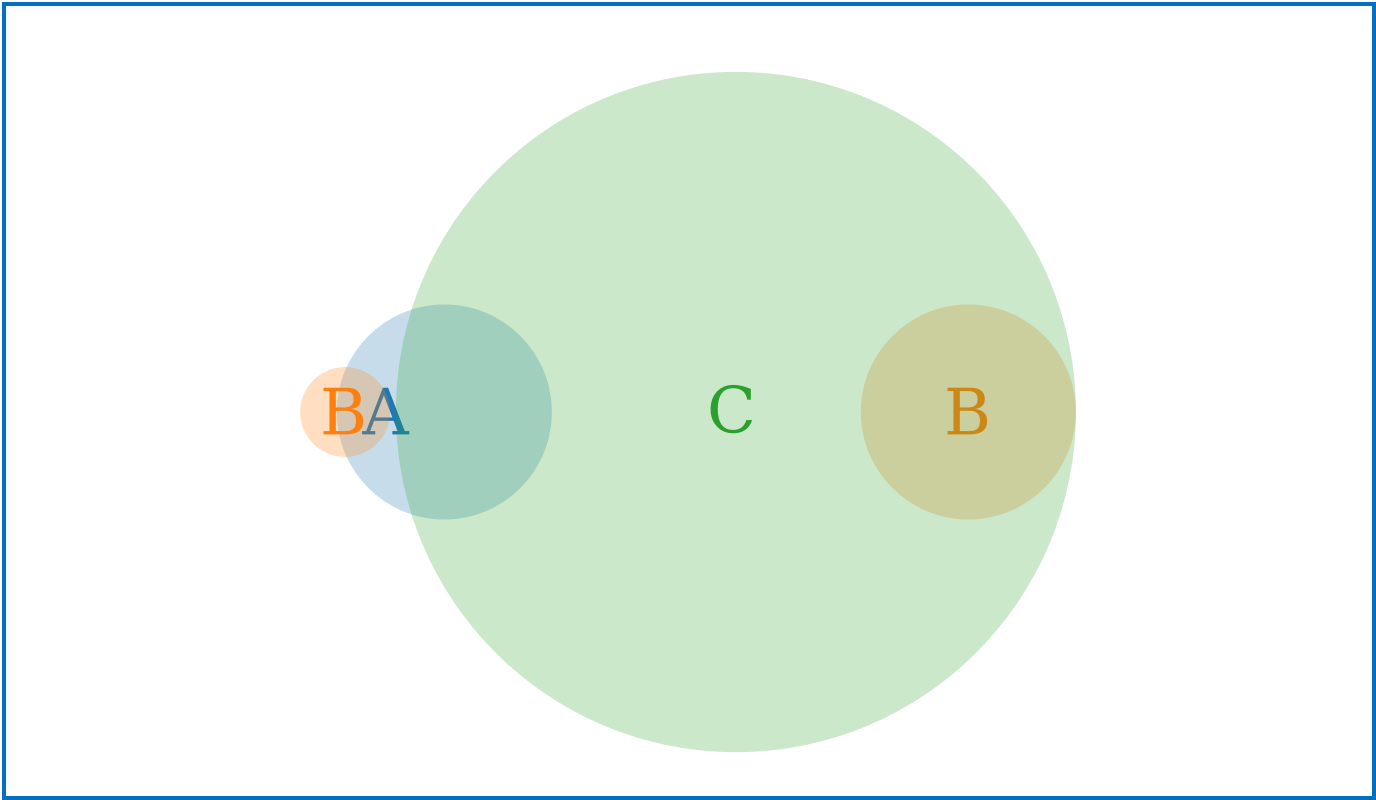

Two values A and B have both been measured as likelihood indicators of C

If we find that A and B are both true, what is the likelihood that C is true?

Trick question?

- Probabilities are not always additive

- Your data set may indicate that A and B both being true is actually evidence that C is false

- It is important to organize observations in a manner that identifies any relationship between A and B, rather than simply drawing conclusions from their known relationships to C
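A small made-up data set shows how this can happen. The counts below are invented for illustration only: A and B each look like good indicators of C on their own, yet C is rare when both are present.

```python
from collections import Counter

# Hypothetical observation counts keyed by (A, B, C).
counts = Counter({
    (True, False, True): 18,
    (True, False, False): 2,
    (False, True, True): 18,
    (False, True, False): 2,
    (True, True, True): 1,
    (True, True, False): 9,
})


def p_c_given(counts, condition):
    """Estimate P(C | condition) where condition is a predicate on (A, B, C)."""
    total = sum(n for key, n in counts.items() if condition(key))
    hits = sum(n for key, n in counts.items() if condition(key) and key[2])
    return hits / total


p_c_a = p_c_given(counts, lambda k: k[0])            # 19 of 30 observations
p_c_b = p_c_given(counts, lambda k: k[1])            # 19 of 30 observations
p_c_ab = p_c_given(counts, lambda k: k[0] and k[1])  # 1 of 10 observations
```

Only the joint count answers the quiz. Storing A and B separately, each with its own relationship to C, would have hidden it.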

Intelligence in the Cyber Domain

- Knowledge: What has been seen?

  - Attack reports

  - Vulnerabilities

  - Assessments

  - Attack surface

  - Business operations

- Conclusions: How to respond?

  - Signatures

  - Watchlists

  - Analysis/Findings

  - Actions

  - Advice

Research cycle: Conclusions are derived from knowledge and then tested. If they hold up to sufficient testing, they reach a confidence threshold, at which point they are stored in the knowledge base and treated the same as knowledge, until they are refuted in a manner that reduces confidence below an accepted threshold.
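One way to picture the cycle is a conclusion that carries its own test record. This is only a sketch: the threshold, the minimum test count, and the simple supporting/refuting ratio are all assumptions, not a prescribed scoring method.

```python
from dataclasses import dataclass

ACCEPT_THRESHOLD = 0.8  # assumed confidence threshold
MIN_TESTS = 5           # assumed minimum amount of testing


@dataclass
class Conclusion:
    statement: str
    supporting: int = 0
    refuting: int = 0

    @property
    def confidence(self):
        total = self.supporting + self.refuting
        return self.supporting / total if total else 0.0

    def record_test(self, held_up):
        """Record one test of the conclusion against new observations."""
        if held_up:
            self.supporting += 1
        else:
            self.refuting += 1

    def is_accepted(self):
        """Treated as knowledge only while enough tests support it."""
        tested = self.supporting + self.refuting
        return tested >= MIN_TESTS and self.confidence >= ACCEPT_THRESHOLD


conclusion = Conclusion("Hypothetical: this beacon pattern indicates family X")
for _ in range(5):
    conclusion.record_test(held_up=True)
accepted_after_testing = conclusion.is_accepted()

# Later evidence refutes it twice: confidence drops to 5/7, below threshold.
conclusion.record_test(held_up=False)
conclusion.record_test(held_up=False)
accepted_after_refutation = conclusion.is_accepted()
```

Acceptance is recomputed every time rather than stored, so a conclusion never becomes permanent.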

Models and Frameworks

- Many tools exist to assist with organizing knowledge

- Each has its purposes and limitations

- Simply implementing these doesn't mean you have intelligence

- Choosing not to use these does not imply a lack of intelligence or capability

- You don't need to implement any of them in their entirety

STIX and TAXII

https://oasis-open.github.io/cti-documentation/

- STIX is a structured data schema for describing cyber objects, and their relationships

- TAXII is a standard protocol defined for exchanging information in STIX format

- Summary information - typically absent any narrative, minimal context

- Complex intermediate format that is not human readable

- Standards conformant (JSON, XML) and easily machine parseable

- Knowledge-centric

- Conclusions are communicated as stored knowledge

- New 2.0 version is a radical change (and an improvement)
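To give a feel for the format, here is a sketch that builds an indicator and bundle as plain Python dictionaries, loosely following the STIX 2.0 JSON layout. The address, name, and timestamp are made up, and the field set should be checked against the specification before relying on it.

```python
import json
import uuid


def make_indicator(pattern, name, timestamp):
    """Build an indicator object as a plain dict (STIX 2.0-style fields)."""
    return {
        "type": "indicator",
        "id": f"indicator--{uuid.uuid4()}",
        "created": timestamp,
        "modified": timestamp,
        "name": name,
        "labels": ["malicious-activity"],
        "pattern": pattern,
        "valid_from": timestamp,
    }


# Hypothetical values: a documentation-range IP address and a fixed timestamp.
indicator = make_indicator(
    pattern="[ipv4-addr:value = '198.51.100.7']",
    name="Hypothetical C2 address",
    timestamp="2018-10-05T00:00:00.000Z",
)

bundle = {
    "type": "bundle",
    "id": f"bundle--{uuid.uuid4()}",
    "spec_version": "2.0",
    "objects": [indicator],
}

serialized = json.dumps(bundle, indent=2)
```

The result is easy for a machine to parse, but it carries no narrative: nothing in the object says why the address matters or how the conclusion was reached.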

Cyber Kill Chain®

https://www.lockheedmartin.com/en-us/capabilities/cyber/cyber-kill-chain.html

- Framework divides an intrusion up into 7 phases

- Common language for describing the sequence of steps adversaries use

- High level framework focuses on the human operations and organization

- Cyber Kill Chain® is a registered trademark of the Lockheed Martin Corporation

Diamond Model for Intrusion Analysis

http://www.dtic.mil/dtic/tr/fulltext/u2/a586960.pdf

- Focus is on drawing conclusions from knowledge and organizing them into four domains

- Domains: Adversary, Capability, Infrastructure, Victim

- Organizational framework, but not software or a data structure

- Mature and relatively static
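The model itself is not a data structure, so the following is just one hypothetical way a team might record events against the four domains and compare them. The class, field names, and sample events are assumptions for illustration.

```python
from dataclasses import dataclass, field

VERTICES = ("adversary", "capability", "infrastructure", "victim")


@dataclass
class DiamondEvent:
    name: str
    adversary: set = field(default_factory=set)
    capability: set = field(default_factory=set)
    infrastructure: set = field(default_factory=set)
    victim: set = field(default_factory=set)


def common_features(first, second):
    """Return the features two events share, grouped by domain."""
    common = {}
    for vertex in VERTICES:
        shared = getattr(first, vertex) & getattr(second, vertex)
        if shared:
            common[vertex] = shared
    return common


# Hypothetical events; the adversary is unknown for both, so it stays empty.
phish = DiamondEvent(
    "2018-03 phish",
    capability={"macro dropper"},
    infrastructure={"mail.bad.example"},
    victim={"finance"},
)
intrusion = DiamondEvent(
    "2018-06 intrusion",
    capability={"macro dropper", "credential dumper"},
    infrastructure={"vpn.bad.example"},
    victim={"finance"},
)

overlap = common_features(phish, intrusion)
```

Here the two events share a capability and a victim but no infrastructure, which is a lead to investigate rather than a conclusion.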

MITRE ATT&CK™

- Focus is on organizing conclusions drawn from your knowledge into a community lexicon

- Community maintained enumeration of common and well-known techniques

- Not comprehensive

- Highly granular

- Newer, moving target

- Full implementation can be very expensive

- Not purely a knowledge base or structure for information exchange

Role of Frameworks

All of these frameworks have a lot of utility, and often come with an implementation cost. Make sure you've identified your need and benefit ahead of implementation.

- STIX / TAXII

  - Cost: High (to produce), Low (to consume)

  - Useful if you anticipate high volume information exchange, or have intelligence providers that supply information in STIX format.

- Cyber Kill Chain®

  - Cost: Low

  - Nice initial step to breaking down attacks. Useful in particular if you have multiple teams that will handle remediation, investigation, and mitigation work. Organizes event information into what occurred at each phase.

Role of Frameworks (cont.)

- Diamond Model

  - Cost: Medium

  - Very intelligence- and adversary-focused analysis. Organizes activity into metadata that can be used to compare events to one another, as well as identify common factors across multiple events.

- MITRE ATT&CK™

  - Cost: High

  - Organizes activity into techniques that are used in a finer-grained list of phases than the Cyber Kill Chain. Focus is on detection/prevention management. Attempts to provide an encyclopedia of TTPs. A good place to continue maturation after CKC.

What Frameworks Won't Do

- Organize your intelligence for you

- Automatically identify conclusions for you

- Capture context so you don't have to

- Tell you what your priorities are

- Get done for free (or eliminate work)

These all represent additional effort, above and beyond the basic efforts of documenting events, collecting artifacts, IOCs, signatures, and building detection

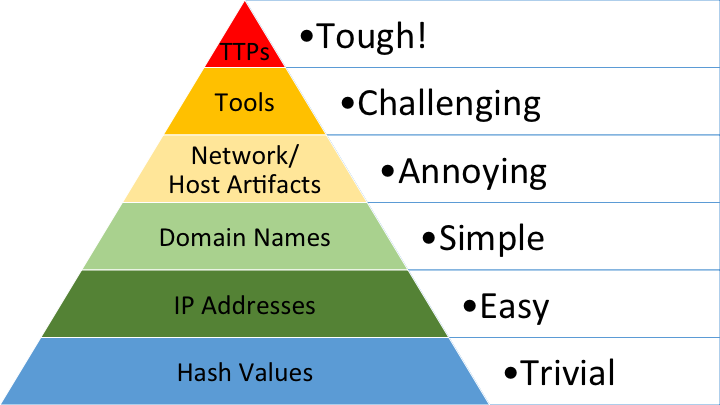

Pyramid of Pain

- Describes adversary investment/cost

- I like to think of it as a maturity model or roadmap

- Don't ignore the bottom of the pyramid for the top!

David Bianco (@DavidJBianco), https://detect-respond.blogspot.com/

Suggestions to get started

- Find a knowledge base that your team can manage

- Build an event-centric storage model for collected incident data

- Start small with collecting/building, storing indicators, observations, malware, signatures and relating these to events

- Build out what you need as you need it

- Gradually move up the pyramid (of pain), maturing your operations as you grow, through iteration

- Recognize that some events may be comprised of multiple attacks or attempts

- Don't try to boil the ocean
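A sketch of what a small event-centric store could look like, using Python's built-in `sqlite3`. The table names, columns, and sample events are hypothetical; the point is only that every observable is tied to the events it was seen in.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE events (id INTEGER PRIMARY KEY, title TEXT NOT NULL);
CREATE TABLE observables (
    id INTEGER PRIMARY KEY,
    kind TEXT NOT NULL,
    value TEXT NOT NULL,
    UNIQUE (kind, value)
);
CREATE TABLE event_observables (
    event_id INTEGER NOT NULL REFERENCES events(id),
    observable_id INTEGER NOT NULL REFERENCES observables(id),
    PRIMARY KEY (event_id, observable_id)
);
""")


def record_event(conn, title, observables):
    """Store an event and link it to each (kind, value) observable."""
    event_id = conn.execute(
        "INSERT INTO events (title) VALUES (?)", (title,)
    ).lastrowid
    for kind, value in observables:
        conn.execute(
            "INSERT OR IGNORE INTO observables (kind, value) VALUES (?, ?)",
            (kind, value),
        )
        (observable_id,) = conn.execute(
            "SELECT id FROM observables WHERE kind = ? AND value = ?",
            (kind, value),
        ).fetchone()
        conn.execute(
            "INSERT INTO event_observables VALUES (?, ?)",
            (event_id, observable_id),
        )
    return event_id


def related_events(conn, event_id):
    """Return (other_event_id, shared_count) for events sharing observables."""
    return conn.execute(
        """
        SELECT theirs.event_id, COUNT(*) AS shared
        FROM event_observables AS mine
        JOIN event_observables AS theirs
          ON theirs.observable_id = mine.observable_id
         AND theirs.event_id != mine.event_id
        WHERE mine.event_id = ?
        GROUP BY theirs.event_id
        ORDER BY shared DESC, theirs.event_id
        """,
        (event_id,),
    ).fetchall()


first = record_event(conn, "Phishing email", [("domain", "evil.example"), ("sha256", "abc123")])
second = record_event(conn, "Proxy alert", [("domain", "evil.example"), ("ip", "198.51.100.7")])
third = record_event(conn, "Port scan", [("ip", "203.0.113.9")])

links = related_events(conn, first)
```

Starting this small still supports event-to-event comparison, and new observable kinds can be added as the team needs them.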

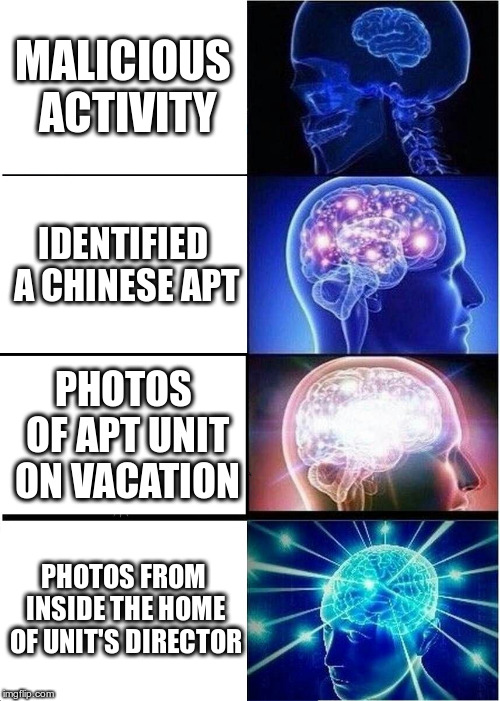

Imagined Attribution

Campaigns, Actors, and Attribution

You don't attribute indicators or signatures. You attribute events to adversaries.

- Using the suggested event-centric data model, you can perform simple relational comparisons

- As you mature up the pyramid, you can establish new methods to link events together

- You will be able to gradually build your probabilities using weighted data points

- Rarely are you going to be able to attribute within 24h of an event or report

Not everyone needs to do cyber attribution

Not everyone's conclusions will match.
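As one possible reading of "weighted data points", the sketch below scores how alike two events are, counting overlap higher up the Pyramid of Pain for more than overlap at the bottom. The weights and sample events are invented, and a high score is a prompt for analysis, not an attribution.

```python
# Hypothetical weights, ordered bottom to top of the Pyramid of Pain.
WEIGHTS = {
    "hash": 1.0,
    "ip": 2.0,
    "domain": 3.0,
    "artifact": 4.0,
    "tool": 5.0,
    "ttp": 6.0,
}


def weighted_similarity(event_a, event_b, weights=WEIGHTS):
    """Weighted Jaccard similarity between two sets of (kind, value) points."""
    shared = event_a & event_b
    combined = event_a | event_b
    total = sum(weights[kind] for kind, _ in combined)
    if total == 0:
        return 0.0
    return sum(weights[kind] for kind, _ in shared) / total


# Hypothetical events: different hashes, same domain and technique.
event_a = {
    ("hash", "aaa111"),
    ("domain", "evil.example"),
    ("ttp", "spearphishing attachment"),
}
event_b = {
    ("hash", "bbb222"),
    ("domain", "evil.example"),
    ("ttp", "spearphishing attachment"),
}

score = weighted_similarity(event_a, event_b)
```

An unweighted comparison would rate these two events at 2 of 4 data points. The weighting rates them 9 of 11, because the differing hashes are cheap for an adversary to change.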

Knowledge Gaps

- Knowledge gaps should help drive collection & research

- Get good at identifying, recording your knowledge gaps

- Regular communication with stakeholders in the broader team to gather questions (gaps)

- Social engagement beyond security is a necessity

- Gradually grow your engagement out, measured as maturity

- Most importantly, track what they connect back to

Lack of detection coverage is just one type of knowledge gap

Some Other Examples of Knowledge Gaps

- Do you know how Spectre & Meltdown impact you?

- That report on Super Micro that came out this week: do you know what is true/false? If any of it is true, how could it impact you or your customers?

- Do you know what cloud services your users work in? What are the top 5?

- What services are the most frequently targeted for credential theft?

Action-Driven Intelligence

- When doing research, whether it is malware analysis, infrastructure mapping, or anything else, identify the possible actions that should be taken based upon the range of possible outcomes

- Efforts that don't lead to clear actions should not be your priority

- This doesn't mean you shouldn't do them, but you should weigh the resource cost against the immediate benefits

- You're always going to have more leads than resources, so learn to prioritize and focus research

If we know X, we would do Y

Managing Cyber Intelligence

Find places to store & manage your knowledge

- Structured observations and artifacts:

  - CRITs https://crits.github.io/ (open-source)

  - MISP https://www.misp-project.org/ (open-source)

  - Commercial offerings: ThreatConnect, Anomali, PwC Terrain Intelligence, Soltra Edge

- Unstructured narrative:

  - Don't overlook the value of this

  - MediaWiki or any other open-source wiki, Confluence, SharePoint, Talla

- Ensure you're mapping consistently across systems

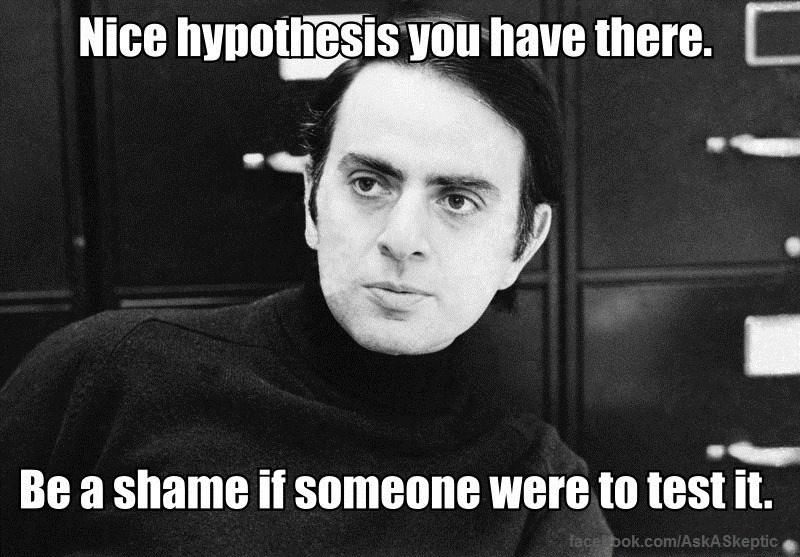

Detection Deployment is a Hypothesis

- Any IOC or Signature you build and deploy is an outstanding hypothesis

- You hypothesize that you're going to detect more future badness after deployment

- This hypothesis needs constant testing

- Adversaries change constantly; just read some of the reports about APT10

- Non-permanency: Detection built today always has a lifespan. Sometimes it is years, sometimes just days

- Recognize there are always some attack(s) you are missing
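One simple way to keep re-testing the hypothesis is to flag detections that have not produced a true positive recently. The GUIDs, dates, and 90-day window below are assumptions, and a flagged detection is a candidate for review, not automatic removal.

```python
from datetime import date, timedelta


def detections_to_retest(last_true_positive, today, max_age_days=90):
    """Return GUIDs with no true positive inside the review window."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(
        guid
        for guid, last_hit in last_true_positive.items()
        if last_hit is None or last_hit < cutoff
    )


# Hypothetical record of the last confirmed true positive per detection.
last_true_positive = {
    "sig-0001": date(2018, 9, 20),
    "sig-0002": date(2018, 1, 15),
    "sig-0003": None,  # deployed, never confirmed
}

retest = detections_to_retest(last_true_positive, today=date(2018, 10, 5))
```

A quiet detection may mean the adversary moved on, or that the signature no longer matches what they do. Only testing tells those apart.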

Hypothesis Testing

http://www.activeresponse.org/15-things-wrong-with-todays-threat-intelligence-reporting/

Fidelity Testing

- When building detection, include IOC and signature GUIDs in the alert information

- Log alert dispositions with this information - ElasticSearch and Splunk are great for this

- Do per-GUID analysis for % FP vs. % TP to measure fidelity as well as effort applied (alerting count)

- Low fidelity is less problematic in lower-frequency alerts than in higher-frequency ones

- Regular summaries can be generated - informing where to apply effort
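The per-GUID analysis can be sketched in a few lines once dispositions are logged. The record layout and GUIDs here are hypothetical; in practice the rows would come from wherever alert dispositions are stored.

```python
from collections import defaultdict


def fidelity_by_guid(dispositions):
    """Summarize (guid, disposition) rows, where disposition is 'TP' or 'FP'."""
    counts = defaultdict(lambda: {"TP": 0, "FP": 0})
    for guid, disposition in dispositions:
        counts[guid][disposition] += 1

    summary = {}
    for guid, tally in counts.items():
        alerts = tally["TP"] + tally["FP"]
        summary[guid] = {
            "alerts": alerts,  # effort applied
            "fp": tally["FP"],
            "tp_pct": 100 * tally["TP"] / alerts,
            "fp_pct": 100 * tally["FP"] / alerts,
        }
    return summary


# Hypothetical alert dispositions logged by analysts.
dispositions = [
    ("sig-0001", "FP"),
    ("sig-0001", "FP"),
    ("sig-0001", "TP"),
    ("sig-0001", "FP"),
    ("sig-0002", "TP"),
    ("sig-0002", "TP"),
]

summary = fidelity_by_guid(dispositions)

# Review the detections that cost the most wasted effort first.
review_order = sorted(summary, key=lambda guid: summary[guid]["fp"], reverse=True)
```

Sorting by false-positive count rather than percentage reflects the point above: a low-fidelity signature that rarely fires costs less than a moderately noisy one that fires all day.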

Automation

Automate Early, Automate Often

- We are all very resource-constrained, and cannot do everything

- Use automation to help free up resources to mature your operation's growth up the pyramid

- Don't skip the step of doing the work manually in your environment

- Doing something manually first helps inform you of the considerations you'll need in order to move it to automation

Good vs. Bad Intelligence

A common question I'll hear:

Is this a good indicator or signature?

- Question is ambiguous

- Often, the question is asking whether the detection yields a high TP and low FP rate

- Rarely can a source inform you if it will be good for deployment in your own environment.

- Your environment's event log records are key to answer the above question

- Some of you may deal with multiple environments, and this answer may differ across them

- Good or bad is a hypothesis that's up to your team to test

Good vs. Bad Intelligence (cont.)

Other ways to interpret the good vs. bad question

- Do my current sources of intelligence regularly suffice to answer questions for me?

- How often do my sources release information that turns out to be incorrect/conflicted?

- Do I get what I need, such as recommended actions, impacted device types, etc.?

- Is there a way to select among what's pertinent to me?

Review

- Think in terms of inference: knowledge leads to conclusions, data leads to confidence

- Organize: Structured & Unstructured Knowledge Management stores

- Organize: At least relate IOCs, signatures, entities to events

- Compare: Facilitate event-event comparisons

- Focus: Don't be overly concerned with what frameworks you're missing out on

Review (cont.)

- Prioritize: Work out how to identify your gaps, then fill them via research

- Grow: Gradually mature your operations, measured by leveraging greater quantities of and more complex intelligence

- Select: Not everything is for everybody, don't fear being selective in what you do/don't apply

- Cycle: It is a cycle. Your work is never complete, your conclusions never permanent

- For every conclusion, recognize that it could be refuted at some point in the future